One-Line Summary

By introducing a novel Modularity Score (MoS) metric grounded in cyclomatic complexity and carefully controlling for confounding transformation artifacts across five LLMs (Code Llama 7B/34B, DeepSeekCoder 6.7B/33B, GPT-4o-mini) and two competitive programming benchmarks (APPS, CodeContests), this study reveals that modular code is not a core factor for improving LLM-based code generation -- and that previously reported benefits were likely artifacts of the code transformation process rather than modularity itself.

Background & Motivation

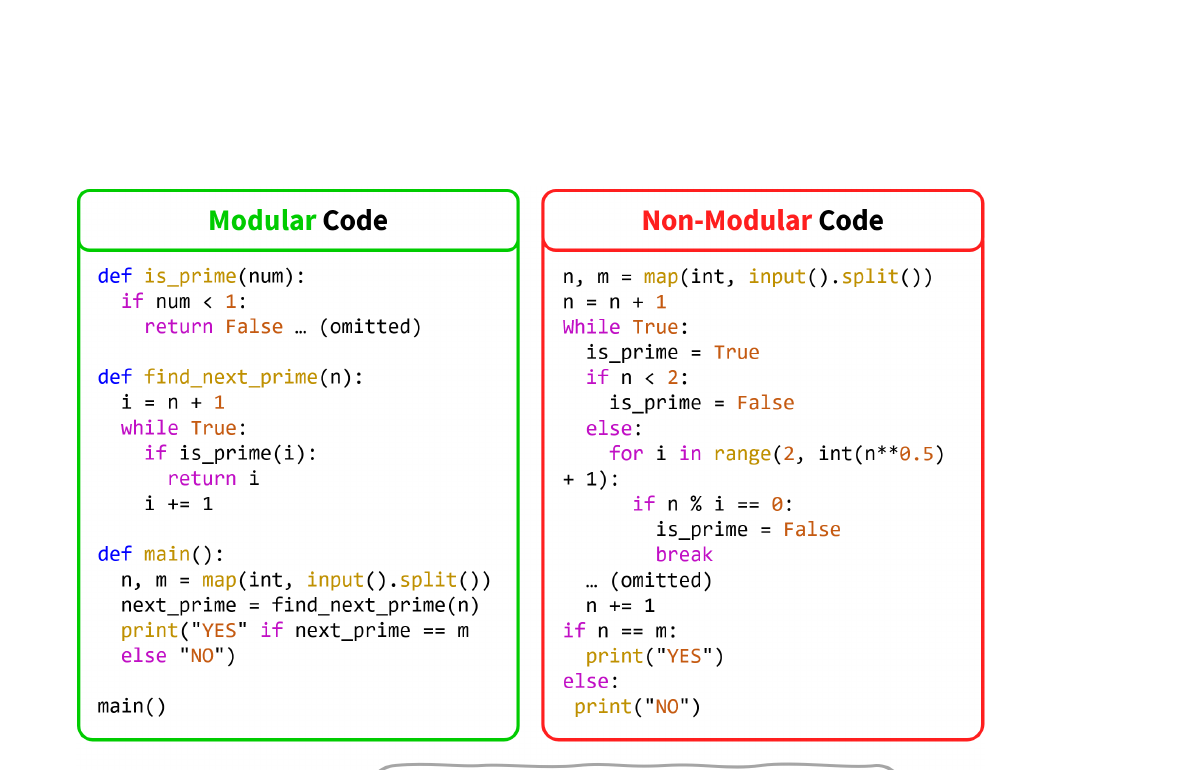

Modular programming -- constructing a final program by integrating smaller, independent building blocks -- has long been regarded as a desirable practice in software development. It reduces complexity, improves maintainability, and enables code reuse. With the rise of code generation agents built upon large language models (LLMs), recent work has naturally adopted this principle, prompting models to decompose problems into helper functions before writing the final solution. But is this traditional practice, designed for human cognition and collaboration, equally effective for these fundamentally different tools?

Conventional Wisdom: Modular code should help LLMs just as it helps human programmers -- by reducing complexity, improving reusability, and making solutions easier to reason about. Several prior studies (e.g., CodeT, Parsel, modular prompting strategies) have reported performance gains from encouraging LLMs to produce modular code. These studies assumed that the benefits of modularity for humans would straightforwardly transfer to language models.

The Problem: Existing studies lack a principled way to measure modularity quantitatively. Without a proper metric, it is impossible to verify whether observed improvements actually stem from modularity itself. Prior work typically compared "modular prompting" vs. "standard prompting," but the modular transformation process introduces confounding changes -- code simplification, reformatting, variable renaming, and even subtle algorithmic changes -- that could independently affect performance.

Core Question: When we rigorously control for confounding factors by introducing a formal modularity metric and carefully designed code categories, does modularity actually improve LLM-based code generation? Or have we been attributing performance gains to the wrong cause?

Proposed Method

The authors introduce a quantitative framework for measuring and evaluating the impact of code modularity on LLM performance. The framework consists of three components: a formal modularity metric, a controlled experimental design with four code categories, and a comprehensive evaluation protocol.

Experimental Results

In-Context Learning on CodeContests (pass@1 / pass@10)

| Model | MC | SC | TMC | TSC |

|---|---|---|---|---|

| Code Llama 7B | 1.98 / 8.02 | 2.58 / 8.81 | 2.57 / 10.18 | 4.35 / 10.67 |

| Code Llama 34B | 4.11 / 12.78 | 5.83 / 14.10 | 3.39 / 13.55 | 5.61 / 15.32 |

| DeepSeekCoder 6.7B | 5.30 / 12.78 | 7.15 / 16.27 | 8.02 / 17.88 | 8.19 / 17.79 |

| DeepSeekCoder 33B | 6.79 / 16.14 | 8.87 / 20.50 | 9.38 / 22.74 | 8.78 / 22.09 |

| GPT-4o-mini | 14.07 | 15.35 | 14.29 | 14.40 |

In-Context Learning on APPS (pass@1 / pass@5)

| Model | MC | SC | TMC | TSC |

|---|---|---|---|---|

| Code Llama 7B | 7.98 / 12.75 | 11.12 / 15.78 | 14.67 / 19.63 | 13.84 / 17.15 |

| DeepSeekCoder 6.7B | 24.76 / 32.59 | 28.93 / 36.26 | 34.26 / 40.74 | 33.24 / 39.73 |

Fine-tuning on CodeContests (pass@1 / pass@10)

| Model | MC (Fine-tuned) | SC (Fine-tuned) |

|---|---|---|

| Code Llama 7B | 3.88 / 12.20 | 4.42 / 12.56 |

| DeepSeekCoder 6.7B | 6.06 / 13.82 | 8.73 / 16.16 |

Perplexity Analysis: LLM Preference for Code Style

| Model | PPL(MC) | PPL(SC) |

|---|---|---|

| Code Llama 7B | 2.20 ± 0.57 | 2.40 ± 1.00 |

| Code Llama 34B | 2.02 ± 0.45 | 2.00 ± 0.44 |

| DeepSeekCoder 6.7B | 1.93 ± 0.41 | 2.05 ± 0.63 |

| DeepSeekCoder 33B | 1.89 ± 0.42 | 1.89 ± 0.42 |

Correlation: MoS vs. Performance (CodeContests, 100 samples)

| Model | Pearson | Spearman |

|---|---|---|

| Code Llama 7B | -0.34 (p=0) | -0.31 (p=0) |

| DeepSeekCoder 6.7B | -0.21 (p=0.04) | -0.25 (p=0.01) |

- SC consistently outperforms MC: Across all five models and both benchmarks, singular (non-modular) code outperforms naturally modular code as in-context demonstrations. On APPS, DeepSeekCoder 6.7B achieves 28.93 pass@1 with SC vs. 24.76 with MC -- a gap of over 4 points.

- TMC vs. TSC shows no clear modularity advantage: When comparing transformed code pairs that differ only in modularity (isolating the pure effect), performance gaps are negligible. For example, DeepSeekCoder 6.7B scores 8.02 (TMC) vs. 8.19 (TSC) on CodeContests -- indicating prior reported benefits were artifacts of the transformation process, not modularity itself.

- TMC/TSC both outperform MC: Both transformed variants substantially outperform original modular code, confirming that the transformation process (code reformatting, simplification) -- not modular structure -- drives the improvement previously attributed to modularity.

- Fine-tuning confirms the trend: Even when models are trained exclusively on modular or singular code (~5K problems, 61K examples), SC-trained models outperform MC-trained ones. DeepSeekCoder 6.7B achieves 8.73 vs. 6.06 pass@1 -- a 44% relative improvement for singular code.

- Negative correlation with MoS: Statistical analysis on 100 CodeContests samples reveals a weak negative correlation between modularity score and code generation performance (Pearson: -0.34 to -0.21, both statistically significant), directly contradicting conventional wisdom.

- Perplexity reveals LLM neutrality: All four models show nearly identical perplexity for modular and singular code (PPL ~1.9--2.4 for both). DeepSeekCoder 33B scores exactly 1.89 for both styles. Unlike humans, who strongly prefer modular structure for comprehension, LLMs are genuinely indifferent to code organization.

- GPT-4o-mini is indifferent: The strongest model shows virtually identical performance across all four code types (14.07--15.35 pass@1), suggesting modularity has negligible impact for highly capable models that can handle either style with equal proficiency.

Why It Matters

This work overturns the prevailing assumption that "modular is always better" in LLM-based code generation, delivering critical insights for both researchers and practitioners:

- Transformation artifacts, not modularity: The paper demonstrates that previously reported effectiveness of modularity was likely due to unforeseen side effects of the code transformation process (e.g., simplification, reformatting, variable renaming) rather than modularity itself. The TMC vs. TSC comparison -- the paper's most carefully controlled experiment -- shows negligible differences, providing a critical methodological warning for future research that conflates code transformation with code structure.

- LLMs are not humans: Perplexity analysis reveals that LLMs exhibit nearly identical predictive ability for both modular and singular code (PPL differences within 0.2 across all models), unlike humans who benefit greatly from modular structure for comprehension and maintenance. This reveals a fundamental cognitive gap: factors influencing the usefulness of code examples differ between human and LLM perspectives, and we should not uncritically transfer human software engineering heuristics to AI systems.

- Practical guidance: Practitioners need not invest extra effort in modularizing code demonstrations for LLM-based code generation. The findings suggest that code quality, correctness, and the transformation process's reformatting effects matter more than structural style when constructing few-shot examples or fine-tuning datasets.

- Methodological template: The MoS metric and four-category experimental design provide a reusable template for future studies investigating other code properties (e.g., commenting style, naming conventions, design patterns) and their effect on LLM performance. The approach of isolating confounds through transformation-then-reversal is broadly applicable.