One-Line Summary

A comprehensive NLP benchmark and systematic evaluation of Universal Domain Adaptation (UniDA) methods that must simultaneously adapt to unfamiliar domains and detect anomalous inputs, revealing that UniDA techniques from computer vision can be effectively transferred to natural language tasks while also uncovering that adaptation difficulty -- governed by domain gap and label-space mismatch -- is the decisive factor in model performance.

Background & Motivation

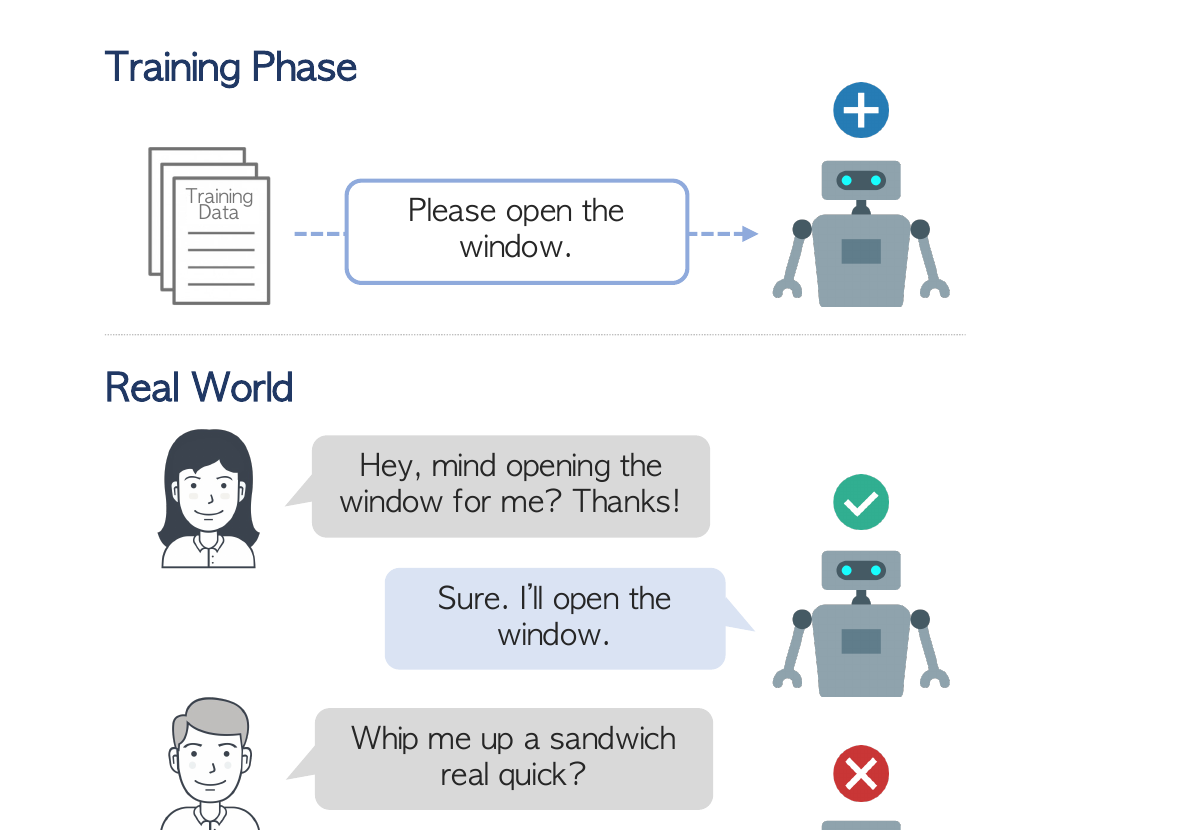

When deploying machine learning systems in the wild, it is highly desirable for them to leverage prior knowledge effectively in unfamiliar domains while also raising alarms on anomalous inputs. Standard domain adaptation in NLP assumes source and target domains share the same label space, differing only in input distribution (covariate shift). However, real-world scenarios are far more complex -- the target domain may introduce entirely new categories, or some source categories may disappear.

Key Gaps in Existing Research:

- Label space mismatch ignored: Most NLP domain adaptation methods assume identical label sets across domains, but in practice the target domain may contain novel classes (open-set) or lack some source classes (partial). For example, a product-category classifier trained on electronics reviews may meet categories it has never seen when deployed on fashion reviews, while some of its source categories never appear there at all.

- UniDA underexplored in NLP: Universal Domain Adaptation -- which handles the most general setting where label spaces overlap only partially -- has been studied extensively in computer vision (with methods like UAN, CMU, OVANet, and UniOT), but remains largely untested for natural language tasks where features are discrete and domain gaps manifest differently.

- No comprehensive NLP benchmark: Prior work lacks a systematic benchmark that evaluates both generalizability (adapting to new domains) and robustness (detecting unknown classes) across diverse NLP tasks with varying difficulty levels. Existing studies test on individual datasets without controlled comparison across scenarios.

- Temporal shifts neglected: Existing benchmarks rarely incorporate temporal distribution shifts, where the target data comes from a different time period than the source data. In real applications, language use evolves over time, making temporal robustness crucial.

Universal domain adaptation (UniDA) addresses the most general and realistic scenario, requiring models to (1) correctly classify samples from shared classes, (2) detect and reject target-private samples as unknown, and (3) handle both covariate shift and label shift simultaneously. This paper bridges the gap by creating a thorough NLP benchmark spanning multiple tasks and difficulty levels, and systematically validating whether UniDA methods originally designed for images can work for text.

Proposed Method: NLP-UniDA Benchmark & Evaluation Framework

Rather than proposing a single new model, this work contributes a comprehensive benchmark that offers a thorough view of a model's generalizability and robustness, along with a systematic evaluation of existing UniDA and NLP domain adaptation methods. The benchmark is designed with careful attention to adaptation difficulty -- the key variable that determines whether methods succeed or fail.

Experimental Results

The evaluation spans multiple NLP tasks under all four UniDA scenarios, using H-score as the primary metric. Key comparisons include four vision-originated UniDA methods adapted to NLP, standard NLP domain adaptation baselines, and source-only models (no adaptation). The results reveal a nuanced picture of cross-modality transferability.
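For reference, the UniDA literature commonly defines the H-score as the harmonic mean of shared-class accuracy and unknown-detection accuracy. These are written ACC_shared and ACC_unknown below, matching the decomposition used in the findings:

```latex
H = \frac{2 \cdot \mathrm{ACC}_{\text{shared}} \cdot \mathrm{ACC}_{\text{unknown}}}
         {\mathrm{ACC}_{\text{shared}} + \mathrm{ACC}_{\text{unknown}}}
```

Because it is a harmonic mean, the score collapses when either term is small. For example, ACC_shared = 0.9 with ACC_unknown = 0.1 gives H = 0.18, whereas the arithmetic mean would be 0.5. A model therefore cannot score well by classifying shared classes accurately while ignoring unknowns, or vice versa.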

UniDA Scenarios Evaluated

| Scenario | Source-Private Classes | Target-Private Classes | Shared Classes | Difficulty |
|---|---|---|---|---|
| Closed-Set | None | None | All | Easiest |
| Partial | Yes | None | Subset of source | Moderate |
| Open-Set | None | Yes | Subset of target | Moderate |
| Open-Partial | Yes | Yes | Subset of both | Hardest |
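The four scenarios follow mechanically from how the source and target label sets overlap. The minimal sketch below partitions the two label sets and names the scenario they induce. It assumes labels are hashable values and that at least one class is shared. The function and field names are illustrative and are not taken from the benchmark's own code.

```python
from typing import Hashable, Iterable, NamedTuple


class LabelSplit(NamedTuple):
    shared: frozenset
    source_private: frozenset
    target_private: frozenset
    scenario: str


def split_label_spaces(source: Iterable[Hashable], target: Iterable[Hashable]) -> LabelSplit:
    """Partition source/target label sets and name the UniDA scenario (hypothetical helper)."""
    src, tgt = frozenset(source), frozenset(target)
    shared = src & tgt
    if not shared:
        raise ValueError("no shared classes: nothing to transfer")
    source_private = src - tgt  # source knowledge that should not be transferred
    target_private = tgt - src  # novel classes the model must reject as unknown
    if source_private and target_private:
        scenario = "Open-Partial"
    elif source_private:
        scenario = "Partial"
    elif target_private:
        scenario = "Open-Set"
    else:
        scenario = "Closed-Set"
    return LabelSplit(shared, source_private, target_private, scenario)


split = split_label_spaces(["a", "b", "c"], ["b", "c", "d"])
print(split.scenario, sorted(split.shared), sorted(split.source_private), sorted(split.target_private))
```

For example, source labels {a, b, c} and target labels {b, c, d} give shared {b, c}, source-private {a}, and target-private {d}, which is the Open-Partial setting.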

Methods Compared

| Category | Methods | Key Mechanism |
|---|---|---|
| UniDA (Vision) | UAN | Sample-level transferability weighting (prediction entropy plus domain similarity) to down-weight likely private-class samples |
| UniDA (Vision) | CMU | Calibrated multiple uncertainties (entropy, confidence, and consistency across a classifier ensemble) to separate shared from unknown samples |
| UniDA (Vision) | OVANet | One-vs-all classifiers with per-class acceptance boundaries |
| UniDA (Vision) | UniOT | Optimal transport for distribution alignment with partial overlap |
| NLP DA | Standard NLP baselines | Domain-adversarial training, feature alignment |
| Baseline | Source-Only | No adaptation (direct transfer) |
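To make OVANet's "per-class acceptance boundary" concrete, here is a minimal sketch of its inference-time rejection rule as we understand it. The closed-set head picks the most likely class. The one-vs-all head for that class then decides whether the sample is an inlier (probability of "positive" at least 0.5) or unknown. Logits are plain Python lists, and the (negative, positive) pair layout and all names are hypothetical. Training is omitted, including OVANet's hard-negative classifier sampling and open-set entropy minimization.

```python
import math
from typing import List, Sequence

UNKNOWN = -1  # hypothetical sentinel for "reject as unknown"


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a short list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def ova_predict(
    closed_logits: Sequence[float],
    ova_logits: Sequence[Sequence[float]],
    threshold: float = 0.5,
) -> int:
    """closed_logits: K scores from the closed-set head.
    ova_logits: K (negative, positive) logit pairs, one binary head per known class."""
    # Step 1: the closed-set classifier proposes the most likely known class.
    k = max(range(len(closed_logits)), key=lambda i: closed_logits[i])
    # Step 2: only that class's one-vs-all head decides acceptance.
    p_inlier = softmax(ova_logits[k])[1]
    return k if p_inlier >= threshold else UNKNOWN


# The proposed class 0 is accepted only if its own binary head agrees.
print(ova_predict([2.0, 0.5, 0.1], [[0.0, 3.0], [1.0, 0.0], [1.0, 0.0]]))
print(ova_predict([2.0, 0.5, 0.1], [[3.0, 0.0], [1.0, 0.0], [1.0, 0.0]]))
```

Each class has its own binary head, so the decision boundary between "this class" and "anything else" is learned per class rather than set by one global confidence threshold. This is the property the table row refers to.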

Key Findings Across Scenarios

- Vision UniDA methods transfer to NLP: UniDA methods originally designed for image inputs can be effectively transferred to the natural language domain. When adapted to use pre-trained language model encoders instead of CNN backbones, methods like OVANet and UniOT achieve meaningful improvements over source-only baselines, validating the cross-modality applicability of the UniDA paradigm.

- Adaptation difficulty is the decisive factor: Performance varies dramatically across datasets depending on the inherent domain gap. On datasets with mild distributional shifts (e.g., between similar product categories in Amazon Reviews), UniDA methods achieve strong H-scores. On datasets with large domain gaps or temporal shifts, even the best methods struggle considerably, with H-score drops of 5-15 percentage points.

- Open-Partial is consistently the hardest scenario: The most general UniDA setting, where both source-private and target-private classes exist, consistently yields the lowest H-scores across all methods and datasets. This highlights the compounding challenge of simultaneously filtering out irrelevant source knowledge while detecting novel target categories.

- Temporal shifts compound the challenge: Datasets with temporal distribution shifts -- where source and target data come from different time periods -- present challenges beyond standard topical domain gaps. Language evolution, topic drift, and shifting class distributions over time cause further performance degradation that existing methods are not well-equipped to handle.

- Unknown detection is the primary bottleneck: Decomposing the H-score reveals that shared-class classification accuracy (ACCshared) is often reasonable, but accurately detecting target-private unknown samples (ACCunknown) remains the critical bottleneck. Most methods either over-reject (misclassifying shared samples as unknown) or under-reject (failing to flag truly novel inputs).

- No single method dominates: No single approach consistently outperforms all others across every dataset-scenario combination. UniOT and OVANet tend to perform well on datasets with clear class structure, while UAN shows advantages when domain gaps are moderate. This suggests that the NLP UniDA problem requires more specialized, task-aware solutions rather than simple transfers from vision.

- NLP-specific challenges emerge: Compared to vision, NLP domain adaptation exhibits unique difficulties: (1) textual domain gaps are often more subtle and harder to measure than visual domain gaps, (2) pre-trained language models already provide strong cross-domain representations that reduce the marginal benefit of adaptation, and (3) class boundaries in text are often more ambiguous, complicating unknown detection.
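The over-reject/under-reject trade-off behind the unknown-detection bottleneck can be seen with a toy confidence-threshold rejector. This rejector is a simple stand-in assumed here for illustration and is not any of the compared methods. Samples whose top-class confidence falls below a threshold are labeled unknown. Sweeping the threshold shows the H-score collapsing at both extremes. The data and helper names are hypothetical.

```python
from typing import List, Sequence, Tuple

UNKNOWN = -1  # hypothetical sentinel for "reject as unknown"


def reject_below(classes: Sequence[int], confidences: Sequence[float], threshold: float) -> List[int]:
    """Keep the predicted class if its confidence clears the threshold, else predict UNKNOWN."""
    return [c if conf >= threshold else UNKNOWN for c, conf in zip(classes, confidences)]


def h_score(preds: Sequence[int], labels: Sequence[int]) -> Tuple[float, float, float]:
    """Return (ACC_shared, ACC_unknown, H); assumes both shared and unknown samples exist."""
    shared = [(p, y) for p, y in zip(preds, labels) if y != UNKNOWN]
    unknown = [p for p, y in zip(preds, labels) if y == UNKNOWN]
    acc_shared = sum(p == y for p, y in shared) / len(shared)
    acc_unknown = sum(p == UNKNOWN for p in unknown) / len(unknown)
    total = acc_shared + acc_unknown
    h = 0.0 if total == 0 else 2 * acc_shared * acc_unknown / total
    return acc_shared, acc_unknown, h


# Toy target set: four shared-class samples followed by two target-private samples.
labels = [0, 1, 0, 1, UNKNOWN, UNKNOWN]
top_class = [0, 1, 0, 1, 0, 1]  # a closed-set head always proposes some known class
confidence = [0.9, 0.8, 0.6, 0.55, 0.7, 0.5]

for t in (0.0, 0.52, 0.65, 0.95):
    acc_s, acc_u, h = h_score(reject_below(top_class, confidence, t), labels)
    print(f"threshold={t:.2f}  ACC_shared={acc_s:.2f}  ACC_unknown={acc_u:.2f}  H={h:.2f}")
```

At threshold 0.00 nothing is rejected (ACC_shared = 1.00, ACC_unknown = 0.00, H = 0.00), which is under-rejection. At 0.95 everything is rejected (ACC_shared = 0.00, ACC_unknown = 1.00, H = 0.00), which is over-rejection. Intermediate thresholds do better: 0.52 gives H = 0.67 and 0.65 gives H = 0.50. This is why the placement of the rejection boundary, rather than shared-class accuracy alone, tends to dominate the final score.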

Why It Matters

This work makes four important contributions that advance the reliability of NLP systems in real-world deployment scenarios:

- First comprehensive NLP UniDA benchmark: By constructing a systematic benchmark with multiple datasets (Amazon Reviews, MNLI, temporal-shift datasets), four canonical UniDA scenarios, and controlled difficulty levels, this work provides the community with a much-needed testbed for evaluating robust domain adaptation in NLP. Prior to this, no unified benchmark existed that systematically tested both generalizability and robustness under label-space mismatch.

- Cross-modality validation of UniDA: The finding that vision-based UniDA methods (UAN, CMU, OVANet, UniOT) can effectively transfer to NLP is significant -- it means the NLP community can build upon a rich body of existing UniDA research from computer vision rather than starting from scratch. At the same time, the results identify where NLP-specific challenges (subtle domain gaps, strong pre-trained representations, ambiguous class boundaries) require new solutions beyond what vision methods provide.

- Adaptation difficulty as a unifying principle: The detailed analysis reveals that adaptation difficulty -- determined by the magnitude of domain gap, severity of label-space mismatch, and presence of temporal shifts -- is the single most important factor governing model performance. This insight provides actionable guidance: practitioners should assess the difficulty of their specific deployment scenario before selecting an adaptation strategy, and future research should focus on methods that can dynamically adjust to varying difficulty levels.

- Roadmap for future research: By identifying unknown detection as the primary bottleneck and temporal shifts as an underexplored challenge, the paper provides concrete directions for advancing NLP UniDA. The benchmark itself serves as a standardized platform for evaluating progress on these open problems.