One-Line Summary

ENGinius is the first large language model designed specifically for plant construction engineering (PCE). Built on SOLAR-10.7B with a four-stage bilingual training pipeline and 16.5B tokens of domain data, it scores 67.5% on the Professional Engineer (PE) benchmark, surpassing GPT-4 (64.0%), and 58.91 on the KOPIA benchmark, outperforming all open-source and proprietary baselines by 3-17%.

Background & Motivation

Recent advances in large language models (LLMs) have drawn attention for their potential to automate and optimize processes across many sectors. However, adoption of LLMs in plant construction engineering (PCE), which covers oil refineries, power plants, chemical facilities, and large-scale infrastructure, remains severely limited, mainly because the domain is highly specialized and lacks resources for domain-specific training and evaluation.

Key Challenges in Plant Construction Engineering:

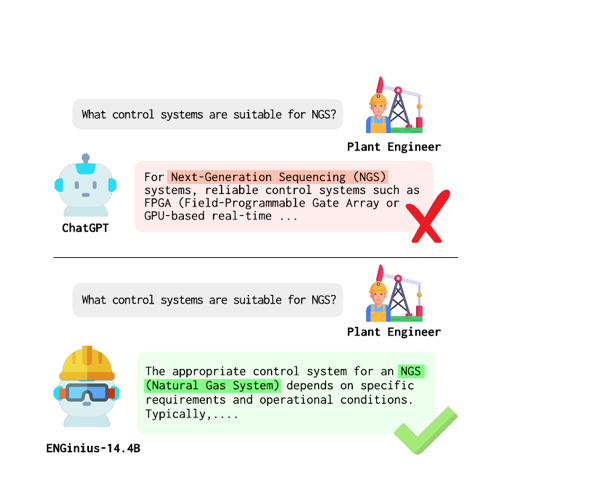

- Highly specialized domain: PCE involves complex technical terminology across mechanical, electrical, piping, civil, architectural, and instrumentation disciplines that general-purpose LLMs fail to handle accurately. For example, ChatGPT's accuracy on PCE-specific acronyms is only 48.4-55.6%, compared to 86-100% on medical, financial, and legal terms.

- Lack of training resources: Unlike medicine or law, PCE has virtually no publicly available domain-specific corpora or instruction datasets for LLM training. Authoritative information is often copyrighted by professional associations and accessible only through subscription-based text search services.

- No evaluation benchmarks: Prior to this work, there were no benchmarks tailored to assess LLM performance on PCE tasks, making it impossible to measure domain competency.

- Bilingual requirements: Korean engineering firms operate globally, requiring seamless Korean-English communication for technical documents, specifications, and cross-border collaboration. Domain-specific language often appears in multilingual or code-switching environments.

ENGinius addresses all of these challenges by presenting end-to-end procedures for domain data construction (16.5B tokens), a multi-stage model training pipeline scaling SOLAR-10.7B to 14.4B parameters, and the first benchmarks (KOPIA and PE) tailored to the plant construction engineering domain.

Proposed Method: Four-Stage Training Pipeline

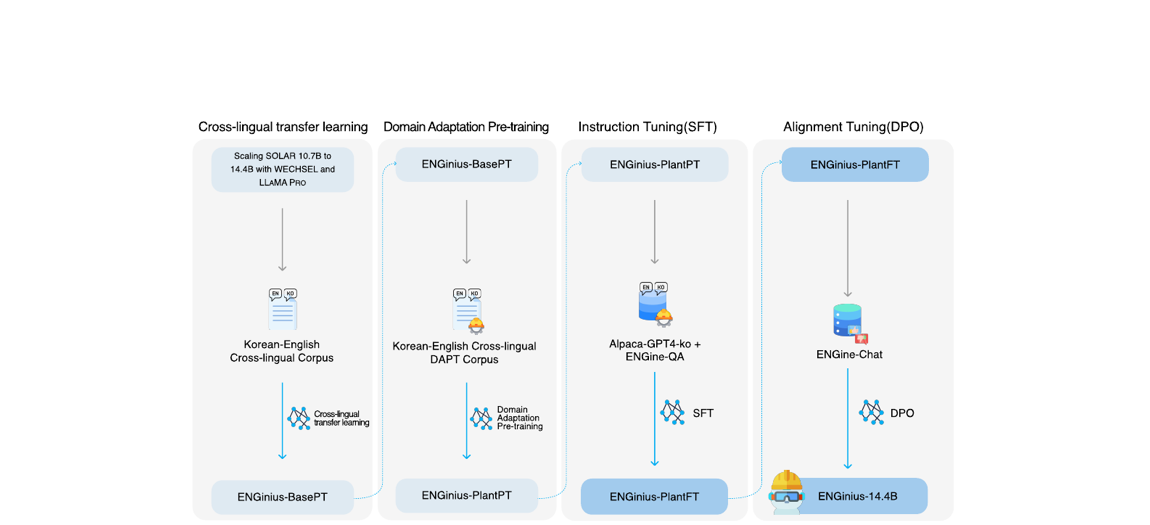

ENGinius employs a four-stage training pipeline: bilingual expansion, domain-adaptive pre-training (DAPT), instruction tuning, and Direct Preference Optimization (DPO). The pipeline starts from SOLAR-10.7B, chosen after comparison with Llama-2 13B and Mistral 7B for its balance of model size and cross-lingual adaptability, and turns it into a domain-specialized bilingual model, ENGinius-14.4B.

Domain-Specific Benchmarks: KOPIA and PE

The paper introduces two novel multiple-choice question (MCQ) benchmarks -- the first evaluation tools for plant construction engineering:

- KOPIA Benchmark (Korean): Developed in collaboration with the Korea Plant Industries Association (KOPIA). It covers mechanical and piping engineering with 1,000 expert-validated test questions on terminology, technical standards, and process knowledge. Planned for public release.

- Professional Engineer (PE) Benchmark (English): Based on actual PE certification exams, comprising 80 questions across three categories: PE Code knowledge, PE Calculation (advanced engineering calculations), and PE General (conceptual understanding). A score of ~65 is generally regarded as the passing threshold.

Experimental Results

The authors evaluate ENGinius against general-purpose LLMs using the LLM-as-a-judge framework (LLaMA3-70B as judge), conducting 20 independent runs per model and averaging the top 5 for final scores.
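The top-5-of-20 aggregation described above can be sketched in a few lines. This is a minimal illustration, not the authors' evaluation code: the function name `top_k_average` and the 20 run scores are hypothetical, chosen only to show the arithmetic.

```python
from statistics import mean


def top_k_average(run_scores, k=5):
    """Average the k highest scores among a model's independent runs."""
    if len(run_scores) < k:
        raise ValueError("need at least k runs")
    return mean(sorted(run_scores, reverse=True)[:k])


# Hypothetical accuracies (%) from 20 runs of one model; NOT values from the paper.
runs = [60.0, 62.5, 58.75, 61.25, 63.75, 57.5, 60.0, 65.0, 62.5, 61.25,
        59.0, 58.0, 64.0, 60.5, 61.0, 56.25, 62.0, 63.0, 59.5, 60.25]

final_score = top_k_average(runs)
print(f"final score: {final_score:.2f}")
```

Averaging only the best runs reports something closer to a model's attainable score than its typical score, so comparisons are only fair when every model is aggregated the same way.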

KOPIA Benchmark (Korean, Plant Engineering)

| Model | Mech. | Pipe | Avg. | Diff. from ENGinius |
|---|---|---|---|---|
| Gemma2-9B-it | 58.64 | 59.39 | 57.89 | -2.13 (-3.6%) |
| Orion-14B-Chat | 51.96 | 52.32 | 51.61 | -8.81 (-15.0%) |
| SOLAR-10.7B | 50.65 | 53.13 | 48.17 | -10.12 (-17.2%) |
| ENGinius-14.4B | 60.77 | 62.63 | 58.91 | - |

Professional Engineer (PE) Benchmark (English)

| Model | PE Code | PE Cal | PE General | Average | Diff. from ENGinius |
|---|---|---|---|---|---|
| Orion-14B-Chat | 41.33 | 20.00 | 52.26 | 36.50 | -31.0 (-45.9%) |
| GPT-3.5-turbo | 60.00 | 47.06 | 45.16 | 48.75 | -18.75 (-27.8%) |
| Gemma2-9B-it | 72.00 | 34.71 | 59.99 | 51.50 | -16.0 (-23.7%) |
| SOLAR-10.7B | 72.00 | 40.59 | 54.83 | 52.00 | -15.5 (-23.0%) |
| GPT-4 | 66.67 | 52.94 | 74.84 | 64.00 | -3.5 (-5.2%) |
| ENGinius-14.4B | 100 | 46.47 | 74.84 | 67.5 | - |
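The "Diff. from ENGinius" column in the PE table can be reproduced from the Average column: the absolute gap is the baseline's average minus ENGinius's 67.5, and the percentage is that gap divided by 67.5. The short sketch below recomputes it; the helper name `diff_from_enginius` is illustrative, and the scores are copied from the table.

```python
# PE benchmark averages copied from the table above.
pe_average = {
    "Orion-14B-Chat": 36.50,
    "GPT-3.5-turbo": 48.75,
    "Gemma2-9B-it": 51.50,
    "SOLAR-10.7B": 52.00,
    "GPT-4": 64.00,
}
ENGINIUS_AVG = 67.5


def diff_from_enginius(score, reference=ENGINIUS_AVG):
    """Return (absolute gap, gap as a percentage of the reference score)."""
    absolute = score - reference
    relative = 100.0 * absolute / reference
    return round(absolute, 2), round(relative, 1)


diffs = {model: diff_from_enginius(score) for model, score in pe_average.items()}
for model, (absolute, relative) in diffs.items():
    print(f"{model}: {absolute} ({relative}%)")
```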

- Surpasses GPT-4 on PE: ENGinius-14.4B achieves an average of 67.5 on the PE benchmark, exceeding GPT-4's 64.0 and surpassing the ~65 passing threshold for Professional Engineer certification. Notably, ENGinius achieves a perfect 100 on PE Code, demonstrating exceptional mastery of engineering code knowledge.

- Dominant on KOPIA: ENGinius outperforms all baselines on the Korean KOPIA benchmark by 3-17%, confirming strong bilingual domain competency across both mechanical and piping engineering subcategories.

- GPT-4's edge in calculation: GPT-4 scores higher on PE Calculation (52.94 vs. 46.47), likely due to its stronger mathematical reasoning capabilities -- a trade-off of ENGinius's more compact 14.4B architecture.

- DAPT is critical: Ablation results show that ENGinius-PlantPT, the model after domain-adaptive pre-training (DAPT), consistently outperforms ENGinius-BasePT, with KOPIA Pipe scores rising from 44.85 to 54.36 and PE General from 38.71 to 54.84.

- Each stage contributes: The four-stage pipeline shows cumulative improvements -- bilingual expansion provides the language foundation, DAPT infuses domain knowledge, instruction tuning aligns behavior to practical tasks, and DPO refines output quality based on expert feedback.
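For readers unfamiliar with the final stage, the standard DPO objective for a single preference pair can be sketched as below. This shows the generic published loss, not the authors' training code: the summary does not state their hyperparameters, so `beta=0.1` and the log-probability values are assumptions for illustration only.

```python
import math


def dpo_loss(policy_chosen_lp, policy_rejected_lp,
             ref_chosen_lp, ref_rejected_lp, beta=0.1):
    """Standard DPO loss for one (chosen, rejected) response pair.

    Inputs are sequence log-probabilities under the trainable policy and
    the frozen reference model. beta is an assumed value, not from the paper.
    """
    chosen_margin = policy_chosen_lp - ref_chosen_lp
    rejected_margin = policy_rejected_lp - ref_rejected_lp
    z = beta * (chosen_margin - rejected_margin)
    # -log(sigmoid(z)) written in a numerically stable form.
    return max(-z, 0.0) + math.log1p(math.exp(-abs(z)))


# Hypothetical log-probabilities: the policy has shifted toward the
# expert-preferred answer relative to the reference model.
loss_improved = dpo_loss(-8.0, -12.0, -10.0, -10.0)
loss_neutral = dpo_loss(-10.0, -10.0, -10.0, -10.0)
print(loss_improved < loss_neutral)
```

When the policy matches the reference, the loss equals log 2; it falls as the policy assigns relatively more probability to the preferred response, which is how expert feedback steers the model.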

Real-World Applications

ENGinius is actively deployed by a major company across real-world PCE workflows, demonstrating tangible industrial impact:

- Expert System: ENGinius functions as a domain expert, answering technical questions with Retrieval-Augmented Generation (RAG). It references internal design standards and technical codes to generate recommendations aligned with regulatory requirements such as EPA rules and the Code of Federal Regulations (CFR).

- Automated Document Analysis: ENGinius streamlines review of complex Invitations to Bid (ITB) documents through contract risk assessment -- retrieving semantically similar clauses from historical data -- and change detection to identify shifts in client requirements.

- Client Letter & Deviation Report Generation: The model drafts official project correspondence by referencing previously approved documents, generating drafts aligned with project standards.

- Document Translation: ENGinius leverages its bilingual capabilities for cross-lingual translation of technical PCE documentation, handling domain-specific terminology that general translation tools frequently mistranslate.
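The clause-retrieval step in the ITB risk assessment above can be pictured as a nearest-neighbor search over historical clauses. The sketch below is a toy stand-in: it uses term-frequency vectors and cosine similarity rather than learned embeddings, and the clauses, query, and function names are all hypothetical. The deployed system's retriever is not described in the summary.

```python
import math
import re
from collections import Counter


def tf_vector(text):
    """Bag-of-words term counts; a stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def most_similar(query, clauses, top_k=1):
    """Return the top_k clauses ranked by similarity to the query."""
    q = tf_vector(query)
    ranked = sorted(clauses, key=lambda c: cosine(q, tf_vector(c)), reverse=True)
    return ranked[:top_k]


# Hypothetical historical contract clauses (illustrative only).
historical = [
    "Contractor shall pay liquidated damages for each day of delay in mechanical completion.",
    "Owner shall provide site access and utilities before mobilization.",
    "All piping materials shall comply with the project specification.",
]
query = "Liquidated damages apply for every day of delay beyond the completion date."
best = most_similar(query, historical)[0]
print(best)
```

In a RAG setup, the retrieved clauses would be passed to the model as context so that its risk assessment is grounded in how similar clauses were handled before.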

Why It Matters

ENGinius represents a pioneering effort to bring large language model capabilities to the plant construction engineering industry, an economically significant but technically underrepresented domain in NLP research. Its contributions extend beyond a single model:

- First domain-specific LLM for plant construction: Opens the door for AI-assisted engineering workflows in an industry that handles large-scale infrastructure projects worldwide, from oil refineries to power plants. ENGinius is already actively deployed in real-world PCE operations.

- Reproducible methodology: The systematic procedures for data construction (16.5B tokens from 10 source categories) and four-stage model training provide a blueprint that can be adapted to other specialized industrial domains facing similar data scarcity challenges.

- First domain benchmarks: KOPIA (1,000 Korean MCQs) and PE (80 English certification-level questions) fill a critical gap, enabling the community to measure and compare LLM performance on plant construction tasks for the first time.

- Bilingual industrial NLP: Demonstrates how to build effective bilingual domain models using WECHSEL and LLaMA PRO, particularly relevant for Korean companies operating in global markets where technical communication spans multiple languages.

- Certification-level competency: ENGinius surpasses the PE exam passing threshold and outperforms GPT-4 on the PE average (67.5 vs. 64.0), demonstrating that a well-optimized 14.4B-parameter domain model can exceed much larger general-purpose models on specialized tasks, even though GPT-4 retains an edge on PE Calculation.